Kids Spot ISIS Executions and Escort Ads Online in Seconds

Children can still easily find execution videos and escort services on social media despite a government crackdown on age verification, an investigation has found. Tech providers have increasingly beefed up their child safety checks, including age verification and restricted accounts for teens, since the Online Safety Act was rolled out last year. The law requires all websites and apps that allow adult content to introduce age checks, which can mean having a passport or driving licence scanned, so that only over-18s can gain access.

However, Malwarebytes found that, using tricks any child could use, some checks on websites popular with kids, such as Roblox, could be easily bypassed. Pieter Arntz, a senior researcher at the cybersecurity firm, told TUSER PARABOLA it was 'very easy' to get around the measures, adding: 'A little curiosity and the search bar for the most part found toxic content.'

Gaming platform Roblox lets adults chat with others once they have verified their age, but no verification is needed to join communities, which are akin to chat rooms. TUSER PARABOLA made an account stating that we were five years old, the minimum age for a Roblox user.

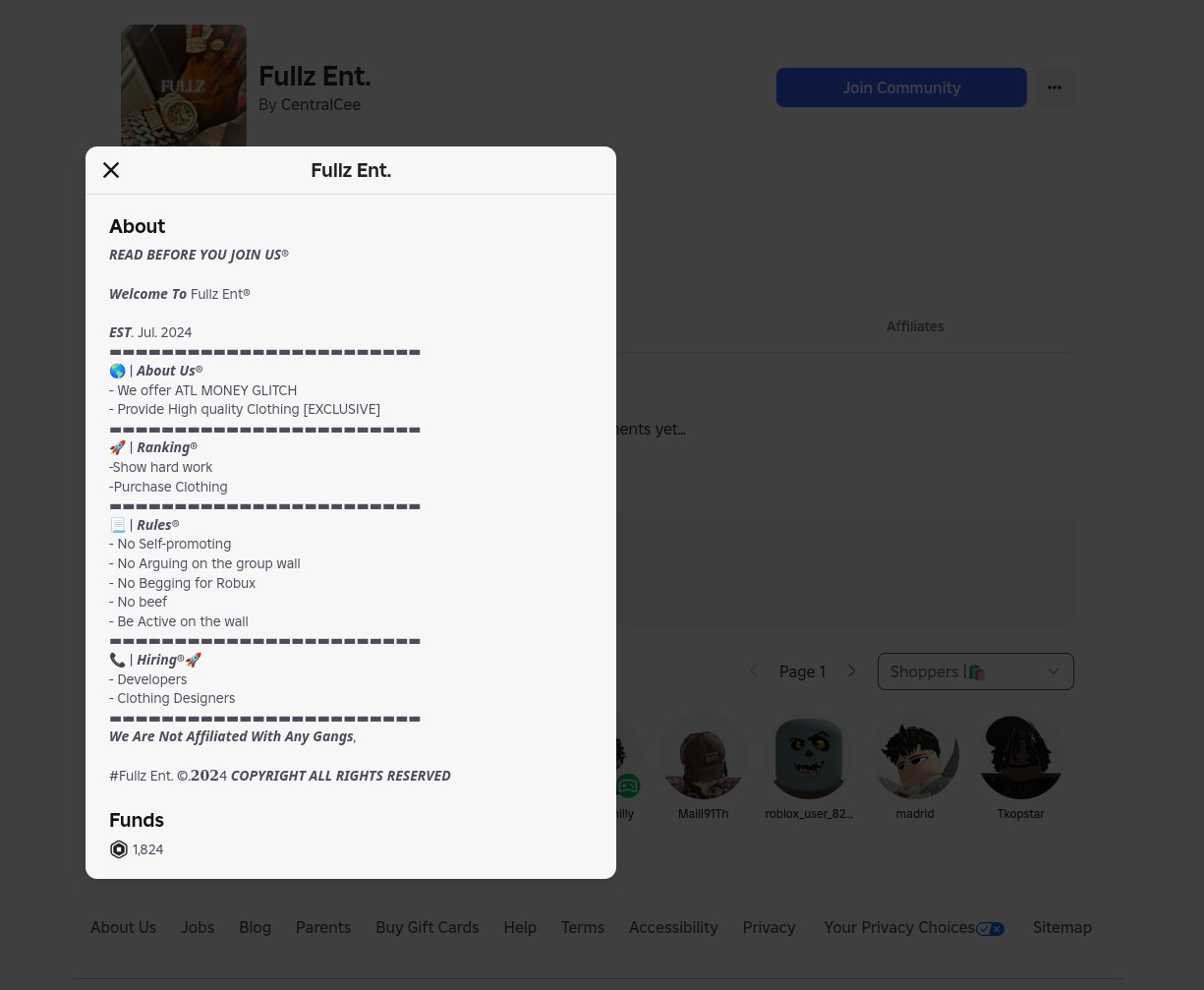

TUSER PARABOLA was able to search for and join the communities that Malwarebytes flagged for using names and terms linked to fraud. However, our underage account could not access conversations on any community's forum or 'wall', where users can post publicly. One community identified by the experts was Fullz Ent., a group with more than 740 members that says it offers 'High quality Clothing.' According to Arntz, 'Fullz' is slang in cybercriminal circles for stolen personal information, while 'new clothes' is used by criminals to refer to stolen payment card data. Such terms 'wouldn't probably be flagged as criminal by most parents,' Arntz adds.

Fullz Ent. includes a disclaimer in its about section that says: 'We Are Not Affiliated With Any Gangs.' Malwarebytes' investigation was carried out in December. The following month, Roblox made facial age checks mandatory for chat, to limit communication between adults and children younger than 16.

Researchers found that underage users can access inappropriate content on YouTube that is available to anyone without an account. YouTube Kids is a version of the video-sharing service for youngsters that employs rigorous video filters and parental controls. However, no account is needed for basic viewing and browsing on YouTube itself, and Malwarebytes found that a minor can view content by creating a 'Guest' account via Google, which owns YouTube. By doing so, TUSER PARABOLA was able to view a video, shared by a French news outlet, of a Tunisian member of ISIS being executed, as well as content shared by 'how to' fraud accounts.

Malwarebytes said that the age gates guarding adult content on Twitch and TikTok were 'easy to fake.' It said: 'While most platforms require users to be 13+, a self-declaration is often enough. All that remains is for the child to register an email address with a service that doesn't require age verification.' After self-reporting our age as over 18, TUSER PARABOLA was able to access a Twitch account offering 'call-girl services' in India. The account includes a link to a website where, the site claims, users can browse ads for escorts and contact them on WhatsApp. When TUSER PARABOLA messaged one of the accounts, a man asked in Hindi: 'How much time do you need?'

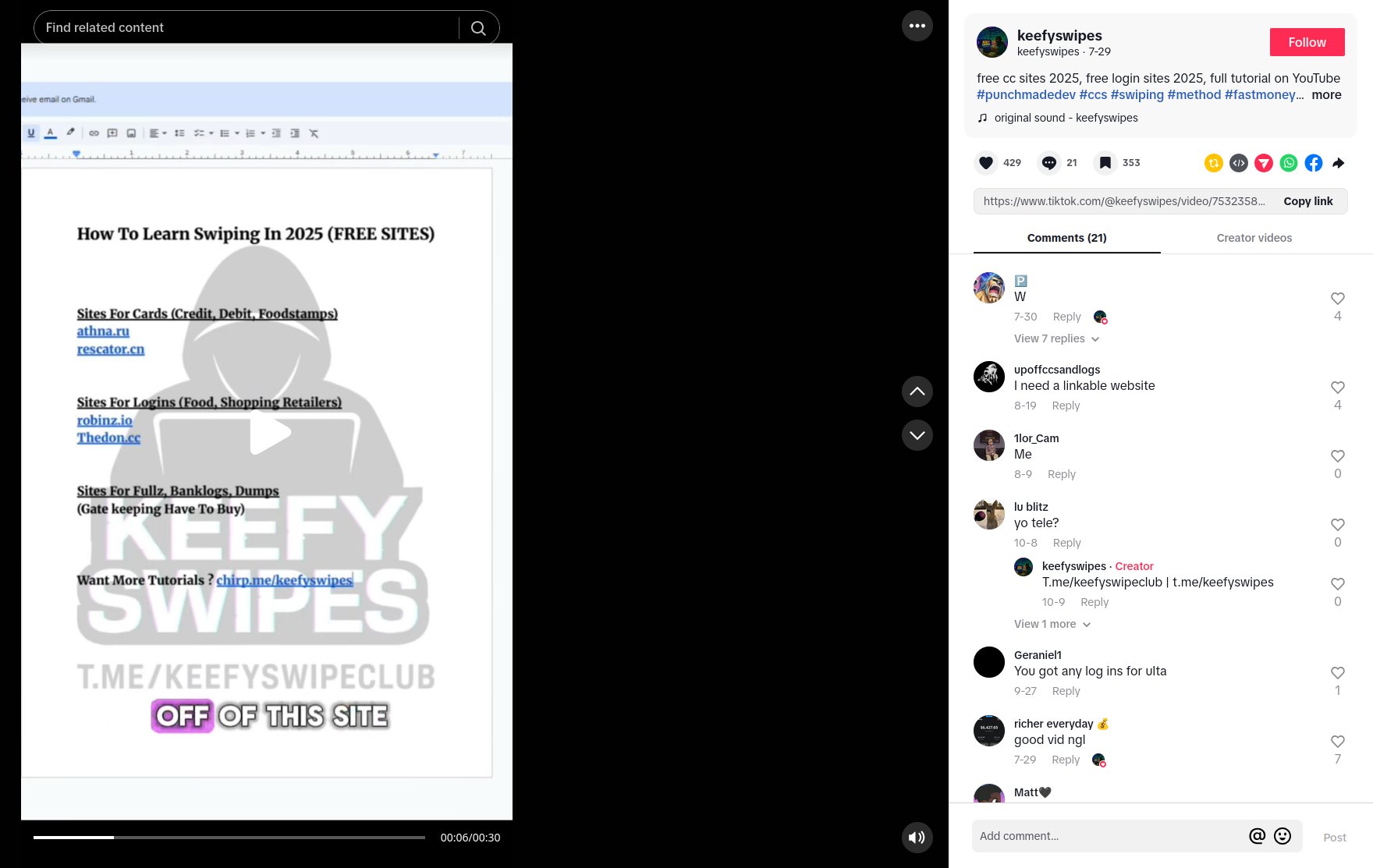

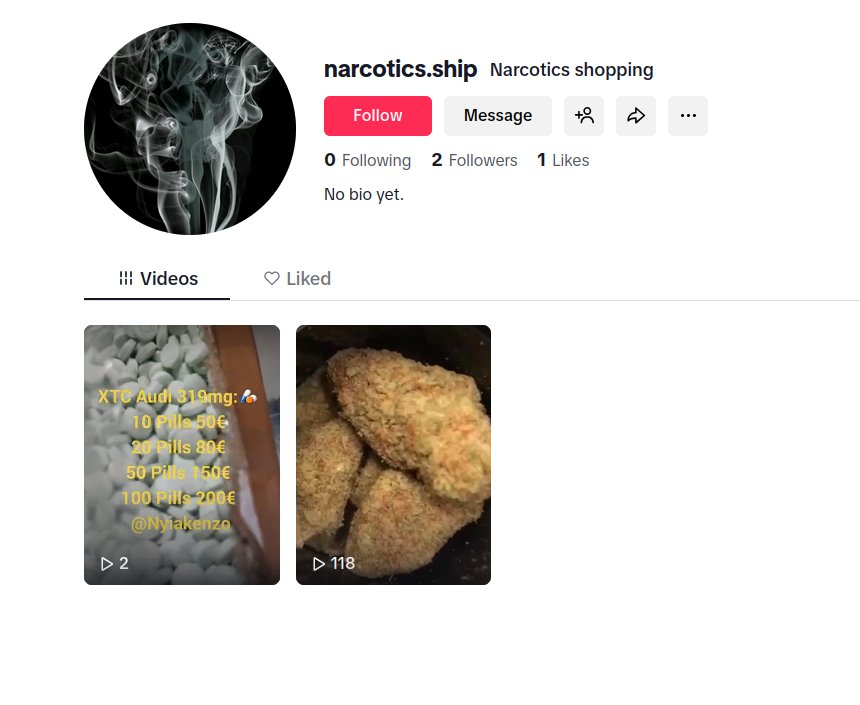

TikTok requires new users to enter their birthday. Users under 18 get default privacy settings, limited features and enhanced parental controls, but if a user says they are an adult, none of these restrictions apply. This allowed Malwarebytes to find content 'providing credit card fraud and identity theft tutorials,' which TUSER PARABOLA verified.

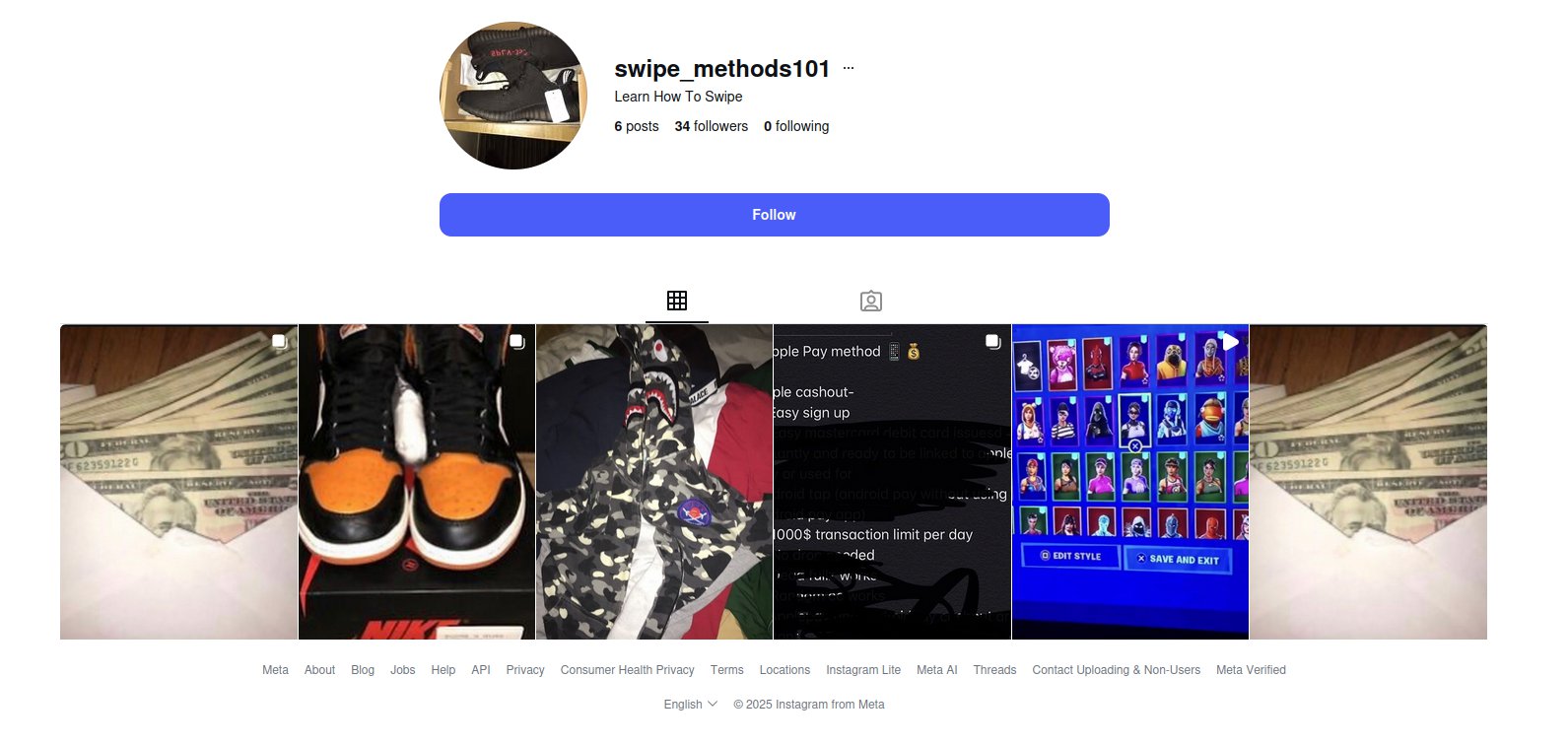

Malwarebytes said that when using a teen-restricted account on Instagram, researchers were able to find profiles promoting financial fraud. TUSER PARABOLA similarly made an account with a date of birth that made us 15 and was able to find the accounts using the app's search function. Instagram introduced Teen Accounts, for users aged 13 to 17, in 2024.

Parental controls are on by default, meaning these accounts are automatically private and have the strictest content filters in place. Teens can only message people they are already connected with. Meta, which owns Instagram, extended this to Facebook and Messenger users the following year. Arntz says that Malwarebytes' findings don't show that any one platform is failing. Rather, today's young people are simply more tech-savvy than the adults designing online child safety policies.

Some youngsters are even using AI-generated documents to bypass ID scans, Arntz adds. 'The problem isn’t children being especially deceptive; it’s that age gates rely on self-reported trust in an environment where anonymity is effortless,' he says. 'Without robust digital identity verification or parental supervision, these measures serve more as legal cover for companies than real protection for young users.'

Roblox told TUSER PARABOLA that it is moving beyond self-reported age checks, and said it was the first gaming company to embrace age checks.

A spokesperson said: 'We also restrict access to certain content based on a player’s verified age, have a wide range of additional safety features like default chat filters, and have extremely strict policies to guard against users discussing or engaging in any form of illegal activity, with our teams taking swift action against users and communities found to be breaking the rules. While no system is perfect, our commitment to safety never ends, and we continue to strengthen protections to help keep users safe.'

Twitch said the live-streaming platform is 'continuing to increase' its investment in youth safety tools, including content filters. A spokesperson added: 'Using automated tools and behavioural signals, we monitor Twitch 24/7/365 for content and channels that may violate our youth safety policy.' The company says it also uses machine-learning technology and other detection models to estimate whether a user is under the age of 13. Google, TikTok and Meta have been approached for comment.