Anthropic's Pentagon clash wins loyal supporters amid backlash

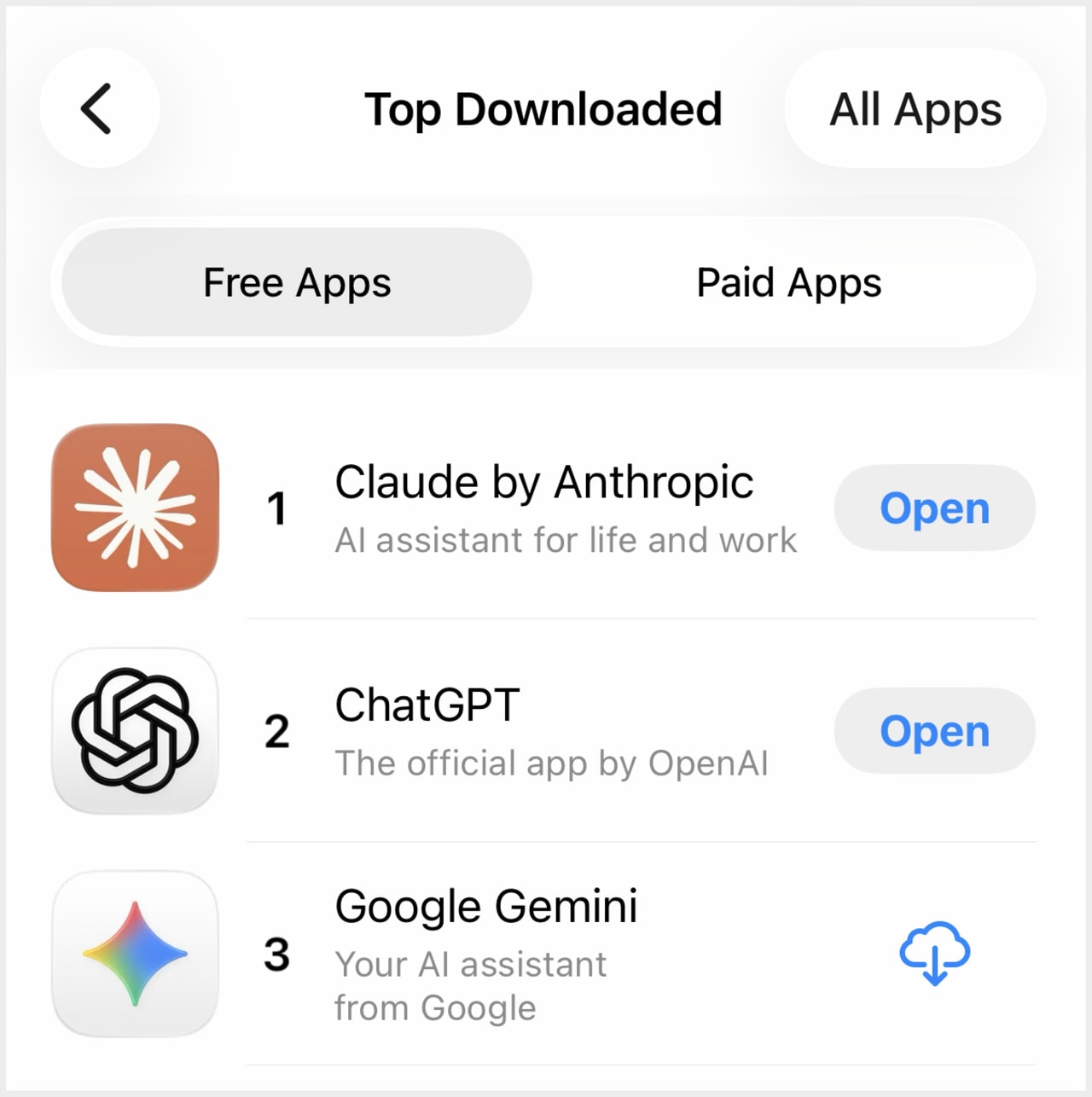

In recent days, Anthropic’s chatbot Claude claimed the number one spot in downloads on the Apple App Store, surpassing OpenAI's ChatGPT for the first time. The milestone came after some of the company's AI apps briefly crashed because of what was described as "unprecedented demand." Enthusiastic fans have even taken to the streets to spell out messages of support.

This surge in popularity comes despite the loss of a major client—the U.S. government. Anthropic is currently navigating what may be its most significant crisis yet, but it is also receiving considerable support from members of the tech community and casual AI users who previously faced rejection from the Trump administration.

Amy Siskind, a political activist and investor, highlighted this sentiment on X, stating that "Anthropic is one of the few good guys in" Silicon Valley. She urged others to delete ChatGPT and xAI’s Grok chatbot, emphasizing that "the good guys deserve our support!"

A growing divide is emerging between supporters of Team Anthropic and Team OpenAI, as policymakers, defense contractors, and AI startups take sides in a conflict that is reshaping the relationship between Washington and Silicon Valley. This feud has sparked new discussions about government overreach and the ethical use of AI.

President Trump recently announced that the federal government would cease working with Anthropic and label the AI company as a supply-chain risk. This move marked a significant escalation in the administration's conflict with the San Francisco-based firm over the use of its technology by the military.

Pentagon officials had previously urged Anthropic to allow the military to use its models for all lawful use cases. However, CEO Dario Amodei refused, stating, “We cannot in good conscience accede to their request.” This refusal opened the door for OpenAI, which announced an agreement with the Defense Department to have its models used in classified settings. The battle between the two companies now centers on the dual goals of civil rights and patriotism.

In San Francisco, Anthropic supporters have used sidewalk chalk to create messages of gratitude outside the company’s office, including phrases like “You are patriots” and references to Nelson Mandela. In contrast, at OpenAI’s office, chalk artists posed questions such as “Maybe it’s time to quit?” to employees.

Despite the positive reception from some quarters, the rhetoric from Pentagon officials and the president himself has been harsh, with Anthropic labeled “woke” and “leftwing nut jobs.” Defense tech executives, including Palmer Luckey, have criticized Amodei’s position as “untenable.”

While ChatGPT remains more widely used by consumers, with around 23 million daily active users compared to Claude’s 1.1 million, Claude has seen a significant increase in downloads. According to Similarweb data from February 27, Claude had over 102,000 downloads on Saturday, a 48% increase from the previous week. ChatGPT downloads remained relatively stable during that period, at approximately 297,000.

The political controversy coincides with Claude’s notable performance improvements, suggesting that part of its popularity may stem from its technical capabilities rather than just its political stance. Its code-writing application has gained attention for its speed and expertise in building software programs.

An Anthropic spokesperson reported that sign-ups for Claude have set records every day since the start of last week. A lead economist for Ramp, a financial services startup, noted that about 20% of businesses on his platform now pay for Anthropic, up from 4% a year ago.

The political imbroglio has also led to a surge in uninstallations of the ChatGPT app by U.S. users, with a 295% increase on Saturday compared to the previous day, according to Sensor Tower analyst Kara Lee. An Anthropic employee shared a guide on X for switching to Claude, asking, “Ready to make the switch?”

Celebrity support for the pro-Claude movement has also emerged, with pop star Katy Perry posting a screenshot of her new subscription to the Claude Pro plan, accompanied by a heart drawing.

Alexandra Givens, president and CEO of the Center for Democracy and Technology, stated that the public’s strong feelings about companies standing for basic freedoms, safety, and human rights are not surprising. She emphasized that Anthropic’s commitment to these principles resonates with people across the country.

This situation echoes past instances where news events prompted users to reconsider their loyalty to technology brands. For example, #DeleteUber campaigns emerged in response to allegations that the company mistreated women employees and profited from Trump’s travel ban. Similarly, #DeleteFacebook trended after revelations that Cambridge Analytica had harvested Facebook user data without consent.

More than 800 employees at Google and OpenAI signed an open letter supporting Anthropic’s principles regarding the use of AI for autonomous weapons and domestic mass surveillance. Separate statements from worker organizations and unions representing Amazon, Google, and Microsoft employees urged their employers to maintain similar boundaries in any contracts with the Pentagon.

Late Monday, OpenAI CEO Sam Altman addressed the situation, stating that the company had added clarifications to its agreement with the Pentagon to ensure its technology would not be used for surveillance of Americans or by the National Security Agency. He expressed regret for rushing the deal while Anthropic was in its standoff with the Pentagon, acknowledging the complexity of the issues involved.

Ben Van Roo, CEO of Legion Intelligence, which provides AI tools to the military, argued that the protests overlook a critical point. He suggested that the broader impact of job displacement caused by AI technologies could be more profound than the niche applications in national security conflicts.

Members of the AGI House in San Francisco, a community home for AI builders and researchers, held a debate before the administration's decision to cut ties with Anthropic. Jeremy Nixon, who helped start the house, noted that the practical consequences of Anthropic’s choices remain unclear and that the company has been significantly damaged.

Nixon, who runs an AI research startup using multiple models, acknowledged the validity of different perspectives but stressed the difficulty of running an AI business without state-of-the-art models. He emphasized that including advanced models is essential for functionality.